[Weekly Retro] Words do the locking

#277 - Apr.2026

Happy Friday!

💡 Here's a quick idea before you head off to the weekend:

I've watched many teams spend months building an answer to the wrong question.

It's not because they were careless. More often, it's because they skipped the critical step of agreeing on what the real problem was.

In most cases, the critical moment isn't the build. In the software world, building is becoming the easiest part today. The critical moment is now those few minutes when someone names the problem for the first time. That's where the wrong frame gets locked in.

Language does the locking. Words matter.

Call it a "dashboard" and the solution space converges instantly. Call it "people can't see what matters" and it opens up into a space of possibilities.

Name the real problem first, clearly. Then build the solution.

📖 Article I've been thinking about

Why Are We Still Doing This? This is a good reflection on how much of our AI usage is driven by habit and hype rather than genuine need, and on how we ignore the real cost behind it.

✉️ Posts worth revisiting

I wrote about this pattern of problem framing a while back: teams build solutions before they've diagnosed the real pain. The signals are subtle but consistent:

This is another short piece on how the questions you ask at the start of a project shape everything that follows. The right questions unlock the right problems:

🧪 Tools I'm experimenting with

I continue to experiment with Claude Code. Last time I posted about how I'm turning Claude Code + Obsidian into a knowledge-base powerhouse, and I've been creating multiple skills for it. As if that weren't enough, I found this: AutoResearch.

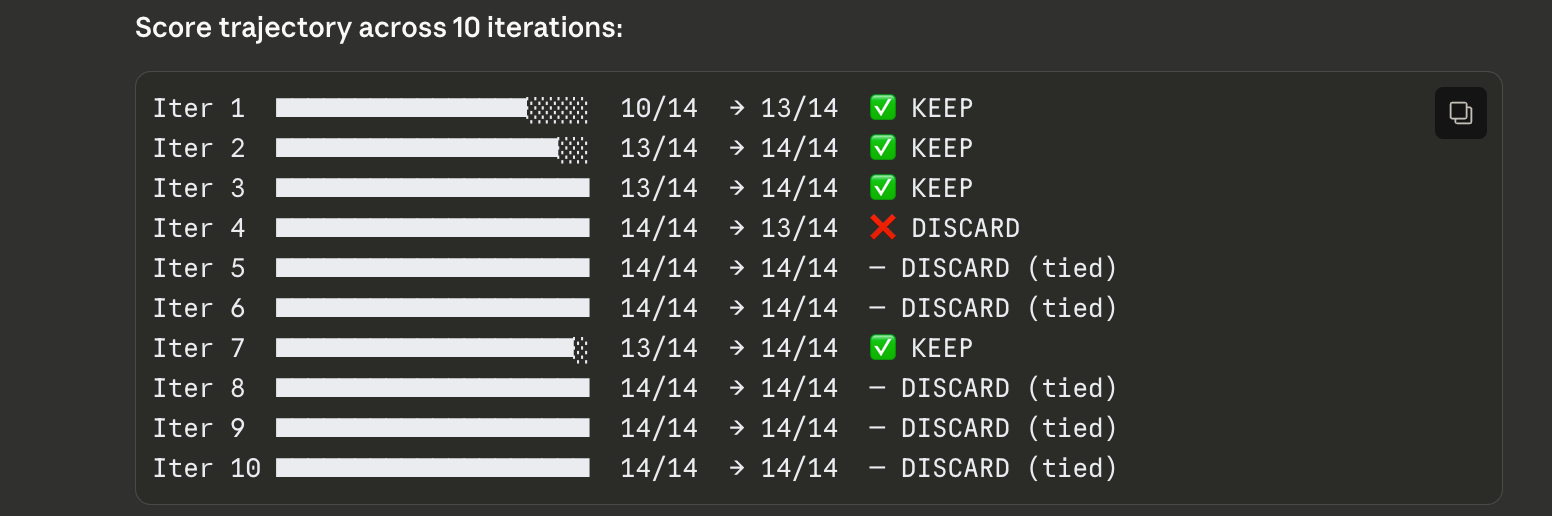

This GitHub repo was created by Andrej Karpathy. It offers a reusable, agent-driven framework for running experiments (research) to improve a model (or a prompt, in my case). In Claude Skill terms, this means you can optimize a skill automatically by specifying the outcomes you expect. In each iteration, it runs an experiment, checks the results against those conditions (evals), and applies improvements.
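To make that loop concrete, here is a minimal Python sketch of the idea: score, propose a change, keep it only if the score improves. It is not AutoResearch's actual code or API. The names `score`, `improve`, and `toy_propose` are hypothetical, the evals are simple keyword checks on the skill text rather than real runs of the skill, and the proposer is a stand-in for the agent that would normally suggest edits.

```python
from typing import Callable, List, Tuple

# An eval is a (name, check) pair; the check inspects the skill text.
# Assumption: real evals would run the skill and inspect its output instead.
Eval = Tuple[str, Callable[[str], bool]]


def score(skill: str, evals: List[Eval]) -> float:
    """Fraction of evals the current skill text passes."""
    passed = sum(1 for _, check in evals if check(skill))
    return passed / len(evals)


def improve(
    skill: str,
    evals: List[Eval],
    propose: Callable[[str, List[str]], str],
    max_iters: int = 10,
) -> Tuple[str, List[float]]:
    """Keep a proposed change only when it raises the eval score."""
    best, best_score = skill, score(skill, evals)
    history = [best_score]
    for _ in range(max_iters):
        if best_score == 1.0:
            break  # every eval passes, nothing left to fix
        failing = [name for name, check in evals if not check(best)]
        candidate = propose(best, failing)
        candidate_score = score(candidate, evals)
        if candidate_score > best_score:
            best, best_score = candidate, candidate_score
        history.append(best_score)
    return best, history


# Toy setup (hypothetical): three keyword evals and a canned fix for each.
evals: List[Eval] = [
    ("mentions_problem", lambda s: "problem" in s),
    ("asks_who", lambda s: "who" in s),
    ("asks_why", lambda s: "why" in s),
]
FIXES = {
    "mentions_problem": "State the problem first.",
    "asks_who": "Ask who is affected.",
    "asks_why": "Ask why it matters.",
}


def toy_propose(skill: str, failing: List[str]) -> str:
    """Stand-in for the agent: append the canned fix for the first failure."""
    return skill + " " + FIXES[failing[0]]


final_skill, history = improve("Summarize the request.", evals, toy_propose)
print(final_skill)
print(history)
```

The design choice worth noticing is that the evals are written down before the loop starts. They are the "name the problem first" step, and the loop only automates the building.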

I ran it on one of my skills, which was scoring 71% accuracy, and it took it to 100% in a few iterations. Imagine a future where you have business processes automated through skills: this is basically your continuous improvement department, iterating in real time!

Aren't these amazing times!?

🖋️ Quote of the week

"A problem well stated is a problem half-solved." — Charles Kettering